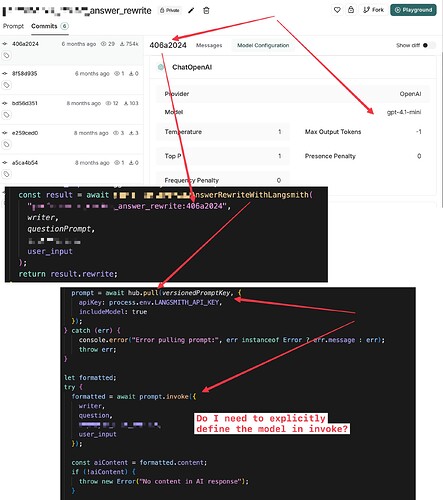

I can’t figure out why tracing reports the model used is gpt-3.5-turbo when my prompt seems to be configured to utilize gpt-4.1-mini.

I’m calling hub.pull in JS, followed by .invoke. The LangSmith prompt config and the code that calls the prompt are both in the screenshot. I’m guessing I need to explicitly define the model when calling invoke?

(First screenshot: setup and code. Second screenshot: trace output from the run, showing model gpt-3.5-turbo.)